Evidence-driven Multidimensional Uncertainty Disentanglement

This direction disentangles the uncertainty that arises from data, conflict, models, and distributions, and provides quantitative measures for assessing whether a model “knows what it does not know”, enabling a transition from mere prediction to proactive risk awareness.

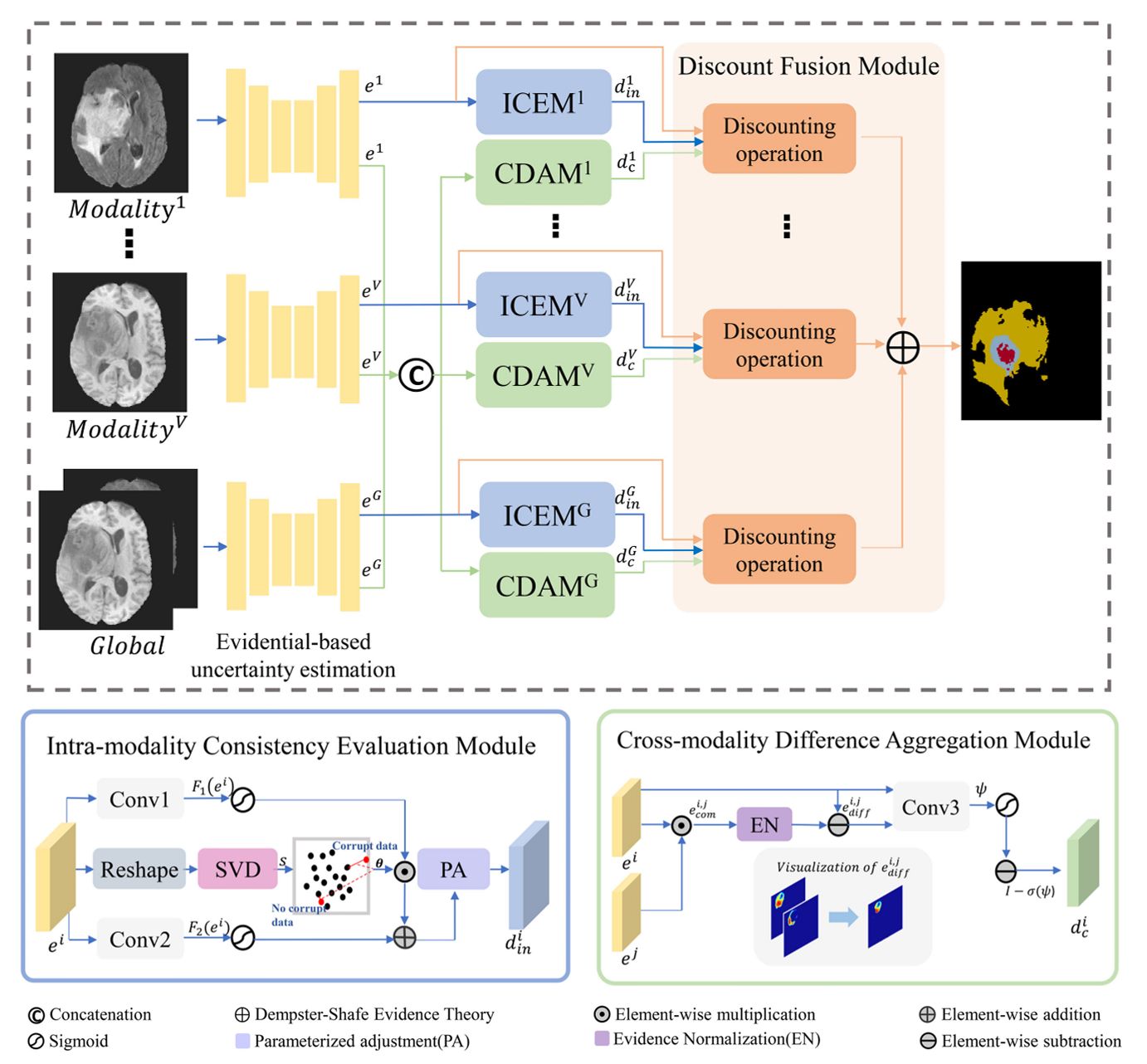

Conflict Quantification

Focuses on measuring inconsistency among multiple evidence sources to identify unreliable or contradictory predictions.
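
As a rough illustration of what measuring inconsistency between evidence sources can look like, the sketch below computes the conflict coefficient used in Dempster-Shafer style evidence combination: the total belief mass that two sources place on different classes. This is an assumed, simplified measure chosen for illustration, not necessarily the one used in this research direction; the function name `conflict_mass` and the example belief values are hypothetical.

```python
def conflict_mass(beliefs_a, beliefs_b):
    """Belief mass that two sources assign to *different* classes.

    Each argument is a list of per-class belief masses (non-negative,
    summing to at most 1; any remainder is unassigned uncertainty).
    """
    if len(beliefs_a) != len(beliefs_b):
        raise ValueError("both sources must cover the same classes")
    return sum(
        b_a * b_b
        for i, b_a in enumerate(beliefs_a)
        for j, b_b in enumerate(beliefs_b)
        if i != j  # only pairs of disagreeing classes contribute
    )


# Hypothetical two-class example: two sources that agree, then two that clash.
agree = conflict_mass([0.8, 0.1], [0.7, 0.2])
clash = conflict_mass([0.8, 0.1], [0.1, 0.8])
```

A larger value indicates that the sources contradict each other more strongly, which is one possible signal for flagging a prediction as unreliable.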

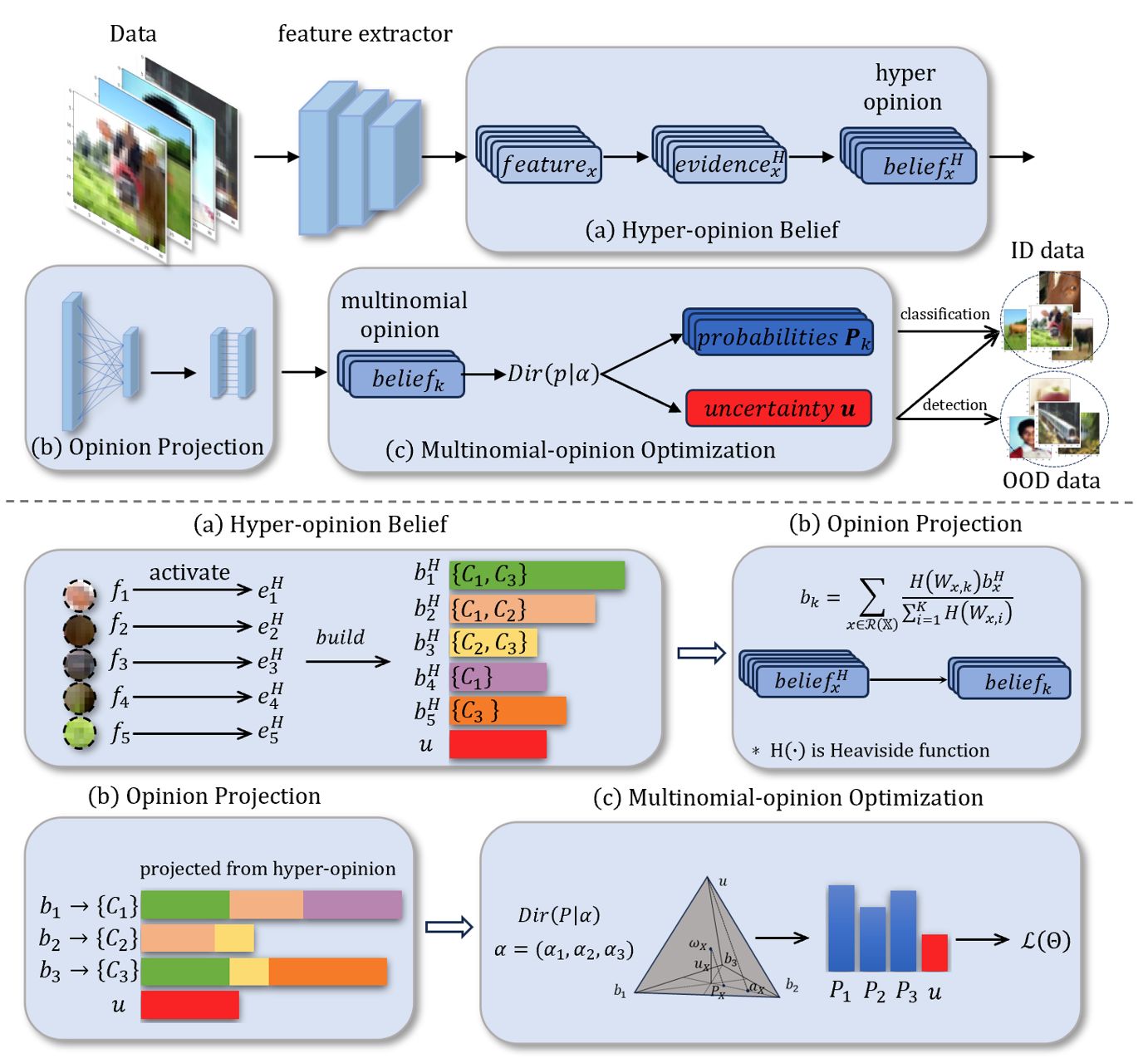

Ambiguity Quantification

Focuses on intrinsic uncertainty caused by class overlap and data vagueness, enabling fine-grained modeling of predictive ambiguity.
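
One simple proxy for ambiguity caused by class overlap is the entropy of the predicted class probabilities, normalized to the range [0, 1]. The sketch below assumes this proxy purely for illustration; finer-grained measures are possible, and the name `normalized_entropy` is hypothetical.

```python
import math


def normalized_entropy(probs):
    """Shannon entropy of a class distribution, divided by log(K).

    Returns 0 for a one-hot prediction and 1 for a uniform one.
    Assumes at least two classes and probabilities that sum to 1.
    """
    k = len(probs)
    if k < 2:
        raise ValueError("need at least two classes")
    entropy = -sum(p * math.log(p) for p in probs if p > 0)
    return entropy / math.log(k)


# Hypothetical predictions: a clear-cut sample and one on a class boundary.
clear_cut = normalized_entropy([1.0, 0.0])
overlapping = normalized_entropy([0.5, 0.5])
```

Under this proxy, a sample lying where two classes overlap scores close to 1, while a clearly separable sample scores close to 0.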

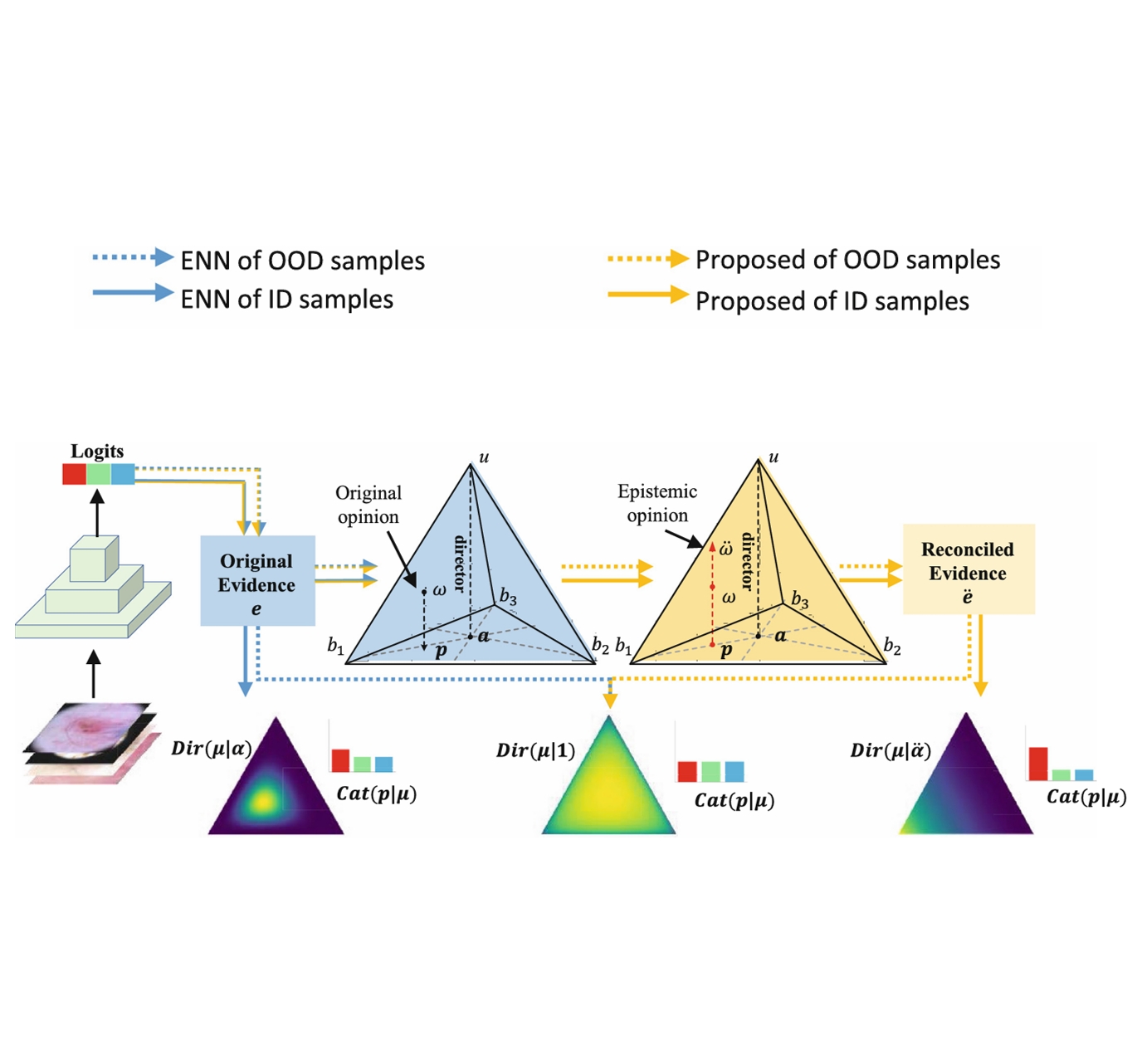

Unknownness Quantification

Focuses on uncertainty arising from distribution shifts and out-of-distribution inputs, supporting reliable detection of unknown or unseen cases.
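
In evidential models that parameterize a Dirichlet distribution, a common way to express unknownness is vacuity: the share of belief left unassigned when little evidence has been collected for any class. The sketch below assumes the usual subjective-logic convention `alpha = evidence + 1`; the function name `vacuity` and the example evidence counts are hypothetical, and this is not claimed to be the exact measure used in this research direction.

```python
def vacuity(evidence):
    """Vacuity u = K / sum(alpha), with alpha_k = evidence_k + 1.

    `evidence` holds non-negative per-class evidence. With no evidence
    at all, u = 1; as total evidence grows, u shrinks toward 0.
    """
    if any(e < 0 for e in evidence):
        raise ValueError("evidence must be non-negative")
    num_classes = len(evidence)
    dirichlet_strength = sum(e + 1.0 for e in evidence)
    return num_classes / dirichlet_strength


# Hypothetical inputs: an unfamiliar sample versus a well-supported one.
unseen = vacuity([0.0, 0.0, 0.0])
familiar = vacuity([27.0, 0.0, 0.0])
```

An out-of-distribution input that triggers little evidence for every class yields a vacuity near 1, which can then be thresholded to flag unknown or unseen cases.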